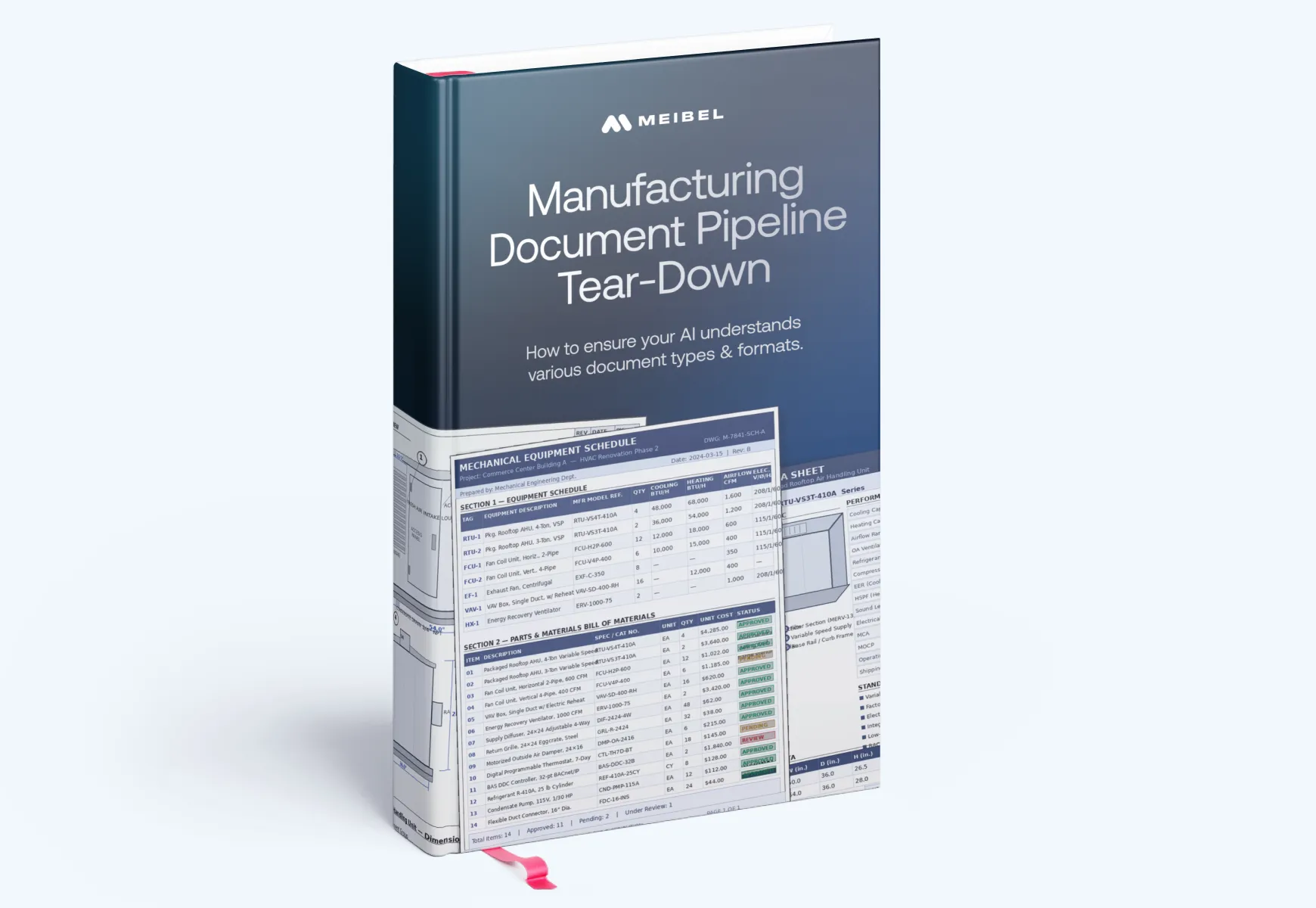

Whitepaper

Five failure modes that silently corrupt extraction, retrieval, and structured data, and the architecture built to stop them. A technical tear-down using a real 168-page fabrication specification.

If your pipeline feeds ERP, PLM, QMS, quoting, or compliance workflows, the stakes are too high for silent failures.

Standard OCR + RAG stacks struggle with varied document formats. This tear-down shows exactly where they break on real industrial documents and what architecture prevents it.

You've patched the pipeline with manual review, exception queues, and spot checks. This report shows what a production-grade replacement actually looks like.

If you process submittals, specs, inspection packages, or supplier documents at volume, your error rate is higher than you think. Here's the evidence and the fix.

Why early AI wins stall, where teams get pulled into the engineering trap, and what it takes to scale AI without adding more manual review, more infrastructure burden, or more production risk.

You didn't set out to build a document review team. But here you are, because the "automated" pipeline keeps needing humans to catch what it missed. This study is for you if...

This isn't a product brochure. It's a documented failure analysis, using a real fabrication specification, that shows where standard extraction pipelines break and what a production-grade alternative requires.

Empowering engineering and product teams of any size to quickly build, run and scale Explainable AI Experiences.

RAG retrieves text by semantic similarity. It finds passages that look related to your query. But technical documents aren't organized by semantic proximity. Requirements reference test protocols. Tolerances live in footnotes that govern table rows three pages away. Specs point to addenda that override earlier clauses. RAG can't follow those relationships. It returns fragments that look right but miss the constraints that matter.

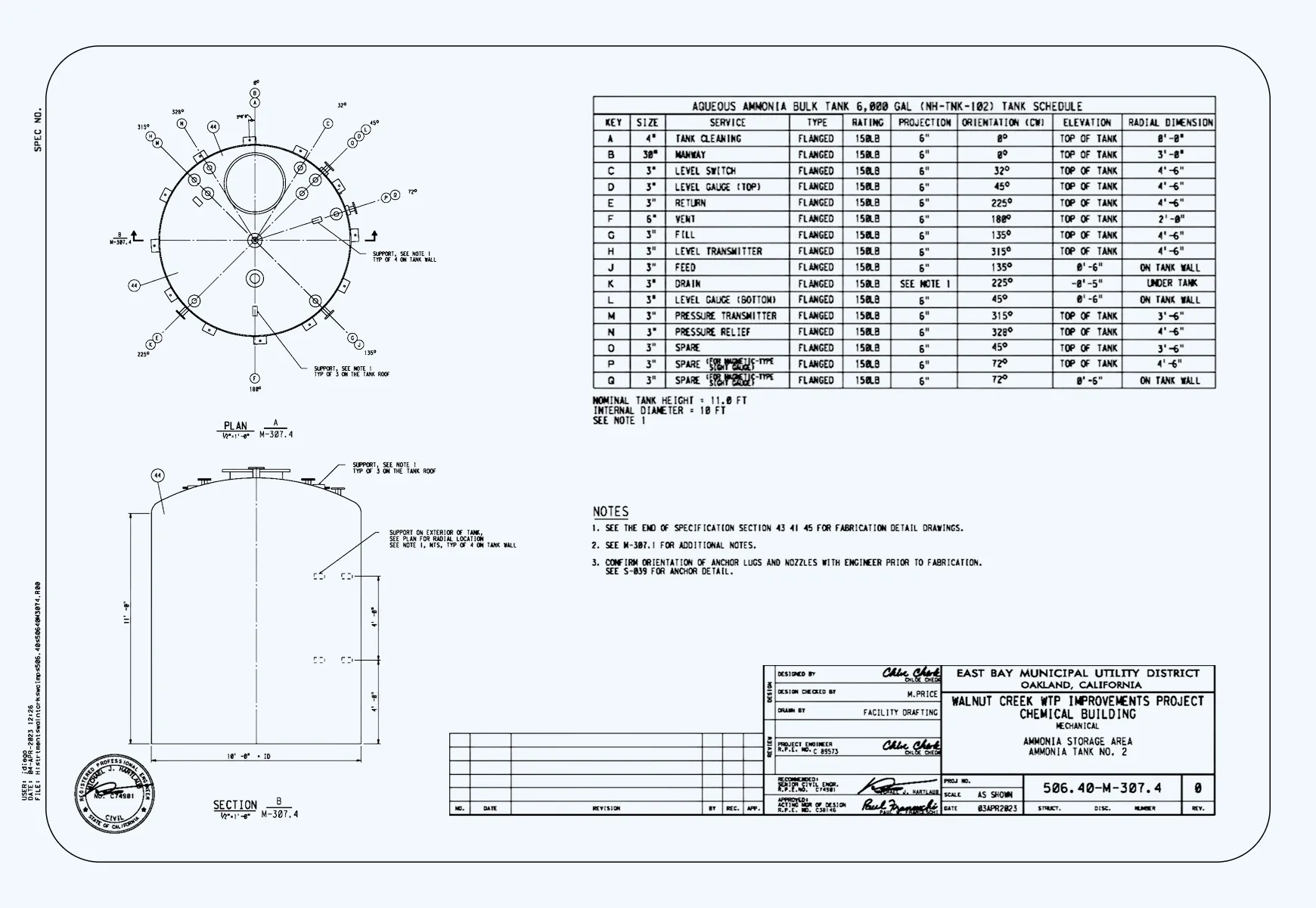

Standard OCR captures text but loses structure. A laminate schedule comes back as a string of numbers with no row or column context. A weld procedure table loses the relationship between joint type, filler material, and preheat requirements. A dimensional tolerance gets extracted but separated from the part it governs. The characters are there. The engineering meaning is gone. And because the output looks complete, nothing flags the failure before it reaches your ERP or QMS.
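To make the structure loss concrete, here is a minimal sketch in Python. The table contents, the column names, and the `preheat_for` helper are invented for illustration and do not come from the fabrication specification discussed in this report.

```python
# Hypothetical weld procedure table; the values are illustrative only.
HEADER = ["joint_type", "filler", "preheat_c"]
ROWS = [
    ["Butt", "ER70S-6", "95"],
    ["Fillet", "E7018", "120"],
]

# What a flat text stream looks like: every character survives,
# but nothing says which number belongs to which joint type.
flattened = " ".join(cell for row in ROWS for cell in row)

# Structure-preserving output keeps each cell bound to its column and row.
structured = [dict(zip(HEADER, row)) for row in ROWS]


def preheat_for(joint_type, table):
    """Look up the preheat value for a joint type in the structured table."""
    for row in table:
        if row["joint_type"] == joint_type:
            return row["preheat_c"]
    return None


print(flattened)
print(preheat_for("Fillet", structured))
```

The flattened string contains the same characters as the structured rows, which is why the failure is easy to miss. Only the structured form can answer "which preheat applies to a fillet joint?" without guessing.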

Manual review catches some errors. But it doesn't scale, and it doesn't tell you your actual error rate. When a reviewer scans a submittal summary, they're checking for obvious problems, not verifying that every extracted value maps back to the correct row in the correct revision of the correct drawing. At volume, across hundreds of supplier submittals, inspection packages, and material certifications, manual review becomes the bottleneck that limits how many bids you can process, not a quality control mechanism.

Because the answer is rarely in one place. A hydrostatic test requirement references a resin system specified in a different section. A coating spec cites an ASTM standard that lives in a separate document. A dimensional requirement in the body of the spec is modified by a footnote two pages later. Standard retrieval finds the passage closest to your question. It doesn't follow the references that complete the answer. So you get part of the requirement, with nothing to tell you the rest is missing.
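One way to picture reference-following retrieval is a bounded graph walk that starts from the best-matching passage. The sketch below is an assumption-heavy illustration, not the architecture described in this report: the section texts, the `REF_PATTERN` regex, and the `expand_with_references` function are all hypothetical.

```python
import re

# Hypothetical mini-corpus: section id -> text. Contents are invented.
SECTIONS = {
    "4.2": "Hydrostatic test at 1.5x design pressure; resin per Section 2.1. See Note 3.",
    "2.1": "Resin system: vinyl ester, cured per Section 2.4.",
    "2.4": "Post-cure for 4 hours minimum.",
    "Note 3": "Hold test pressure for 2 hours.",
    "7.1": "Shipping and handling.",
}

# Assumed reference syntax: "Section 2.1" or "Note 3".
REF_PATTERN = re.compile(r"(Section \d+(?:\.\d+)*|Note \d+)")


def normalize_ref(ref):
    """Map 'Section 2.1' to the key '2.1'; leave 'Note 3' unchanged."""
    return ref[len("Section "):] if ref.startswith("Section ") else ref


def expand_with_references(start_id, sections, max_depth=3):
    """Breadth-first walk from the retrieved passage along explicit references."""
    seen = [start_id]
    frontier = [start_id]
    for _ in range(max_depth):
        next_frontier = []
        for section_id in frontier:
            for ref in REF_PATTERN.findall(sections.get(section_id, "")):
                target = normalize_ref(ref)
                if target in sections and target not in seen:
                    seen.append(target)
                    next_frontier.append(target)
        if not next_frontier:
            break
        frontier = next_frontier
    return seen


print(expand_with_references("4.2", SECTIONS))
```

Similarity search alone would return section 4.2. The walk also pulls in the resin section, the cure requirement it cites, and the note, while leaving unrelated sections out. A real document set would need far more robust reference detection than a single regex.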

You define the fields your workflow needs as a fixed schema, the same fields your ERP, PLM, or QMS expects, regardless of how any individual supplier has laid out their submittal. The system extracts into that schema whether the source is a structured data table, a prose paragraph with embedded tolerances, a multi-column laminate schedule, or a hand-annotated drawing. Supplier abbreviations are resolved using legends found in the document set. Units are normalized between metric and imperial. The output is consistent even when the inputs look nothing alike.
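As a rough sketch of what schema-first extraction implies, the Python below normalizes two differently formatted supplier inputs into one fixed record. The `HydroTestRecord` schema, the field names, the choice of psi as the target unit, and the sample inputs are assumptions made for illustration only.

```python
from dataclasses import dataclass

# Conversion factors to psi (1 bar = 14.5037738 psi, 1 kPa = 0.145037738 psi).
TO_PSI = {"psi": 1.0, "bar": 14.5037738, "kpa": 0.145037738}


@dataclass(frozen=True)
class HydroTestRecord:
    """Hypothetical fixed schema that a downstream ERP or QMS would expect."""
    resin_system: str
    test_pressure_psi: float


def normalize(raw, legend):
    """Resolve supplier abbreviations via the legend and convert pressure to psi."""
    value, unit = raw["pressure"]
    resin = legend.get(raw["resin"], raw["resin"])
    return HydroTestRecord(
        resin_system=resin,
        test_pressure_psi=round(value * TO_PSI[unit.lower()], 2),
    )


# Legend as it might appear in the document set (invented example).
legend = {"VE": "Vinyl ester"}

supplier_a = normalize({"resin": "VE", "pressure": (150, "psi")}, legend)
supplier_b = normalize({"resin": "Vinyl ester", "pressure": (10, "bar")}, legend)

print(supplier_a)
print(supplier_b)
```

Both suppliers end up in the same shape with the same units, so downstream systems never see the abbreviation or the bar value. An unknown unit raises a `KeyError` here rather than passing through silently, which is a deliberate choice in this sketch.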

Every extracted value, whether it's a bolt torque specification, a resin system designation, a hydrostatic test pressure, or a cure cycle requirement, maps to an exact location in the source document. When a value gets questioned during inspection, audit, or a supplier dispute, you click the citation and land on the exact page, with the relevant region highlighted. You're not searching a 200-page fabrication spec hoping to find the same phrase. The provenance is built into the output from the moment of extraction.
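A minimal way to model this kind of provenance is to make the source location a required part of every extracted value. The sketch below uses hypothetical names (`SourceLocation`, `ExtractedValue`, `citation`) and invented sample data; it is not the product's actual data model.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class SourceLocation:
    document: str
    page: int
    # Bounding box of the highlighted region as (x0, y0, x1, y1); units assumed.
    bbox: Tuple[float, float, float, float]


@dataclass(frozen=True)
class ExtractedValue:
    field: str
    value: str
    source: SourceLocation  # required: a value cannot exist without a location


def citation(ev):
    """Render a human-readable citation for an extracted value."""
    return f"{ev.field} = {ev.value} [{ev.source.document}, p. {ev.source.page}]"


torque = ExtractedValue(
    field="bolt_torque",
    value="75 ft-lb",
    source=SourceLocation("fabrication_spec.pdf", 112, (72.0, 340.5, 310.0, 356.0)),
)

print(citation(torque))
```

Because `source` has no default, constructing a value without a location fails immediately, which mirrors the idea that provenance is attached at extraction time and not reconstructed later.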

That's the most important question in this report. Most pipelines don't produce a verifiable error rate because most extracted values aren't traced back to a source location. If you can't link an extracted torque spec or material grade to the exact line in the exact document it came from, you can't audit it, you can't measure it, and you can't improve it. What you have is automation that produces outputs that look authoritative. Whether they're correct is a separate question, and without source tracing, it's one you can't answer with confidence.
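The link between source tracing and a measurable error rate can be sketched in a few lines. Everything below is hypothetical: the `audit` function, the `(page, line)` location keys, the sample values, and the simple substring check used as a stand-in for real verification.

```python
def audit(extracted, source_text):
    """Compute an error rate over traced values and count the untraced ones.

    extracted:   list of dicts with 'field', 'value', and optionally 'loc'
    source_text: mapping from a (page, line) location to the text found there
    """
    traced = [e for e in extracted if e.get("loc") in source_text]
    untraced = len(extracted) - len(traced)
    # Crude check: the extracted value must appear verbatim at its cited location.
    errors = [e for e in traced if e["value"] not in source_text[e["loc"]]]
    error_rate = len(errors) / len(traced) if traced else None
    return {"traced": len(traced), "untraced": untraced, "error_rate": error_rate}


# Invented sample data for illustration.
source_text = {
    (12, 4): "Torque all flange bolts to 75 ft-lb.",
    (30, 9): "Material grade: 316L.",
    (41, 2): "Test pressure: 150 psi.",
}
extracted = [
    {"field": "bolt_torque", "value": "75 ft-lb", "loc": (12, 4)},
    {"field": "material_grade", "value": "304L", "loc": (30, 9)},  # wrong value
    {"field": "test_pressure", "value": "150 psi", "loc": (41, 2)},
    {"field": "cure_cycle", "value": "4 h"},  # no source location
]

print(audit(extracted, source_text))
```

The untraced value is reported separately because it can be neither confirmed nor refuted. When nothing is traced, the function returns `None` for the error rate instead of a misleading zero.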

No. The failures this report documents happen before the LLM sees the document. When OCR flattens a table into a text stream, the structure is already gone. When a cross-reference isn't traversed at retrieval time, the LLM never gets the second half of the answer. Better prompts can't recover structure that wasn't preserved at ingestion.