Free Webinar

Your team was hired to build products, not AI infrastructure. In this 45-minute webinar, we’ll show you the three core capabilities that help teams spend less time wiring up AI and more time shipping.

This webinar is for teams that have already proven AI can work. Now they need it to work reliably, repeatedly, and under real production conditions.

You are connecting models, data, retrieval, prompts, and workflows into systems that have to survive real traffic and messy inputs. You need less prompt sprawl and more control.

You are trying to ship real AI outcomes without turning your team into an internal infrastructure shop. You need speed, structure, and a way to scale without losing visibility.

You are funding AI, setting priorities, and managing risk. You need to know where governance belongs, how trust is measured, and what it takes to move from demo value to operational value.

This session covers why early AI wins stall, where teams get pulled into the engineering trap, and what it takes to scale AI without adding more manual review, more infrastructure burden, or more production risk.

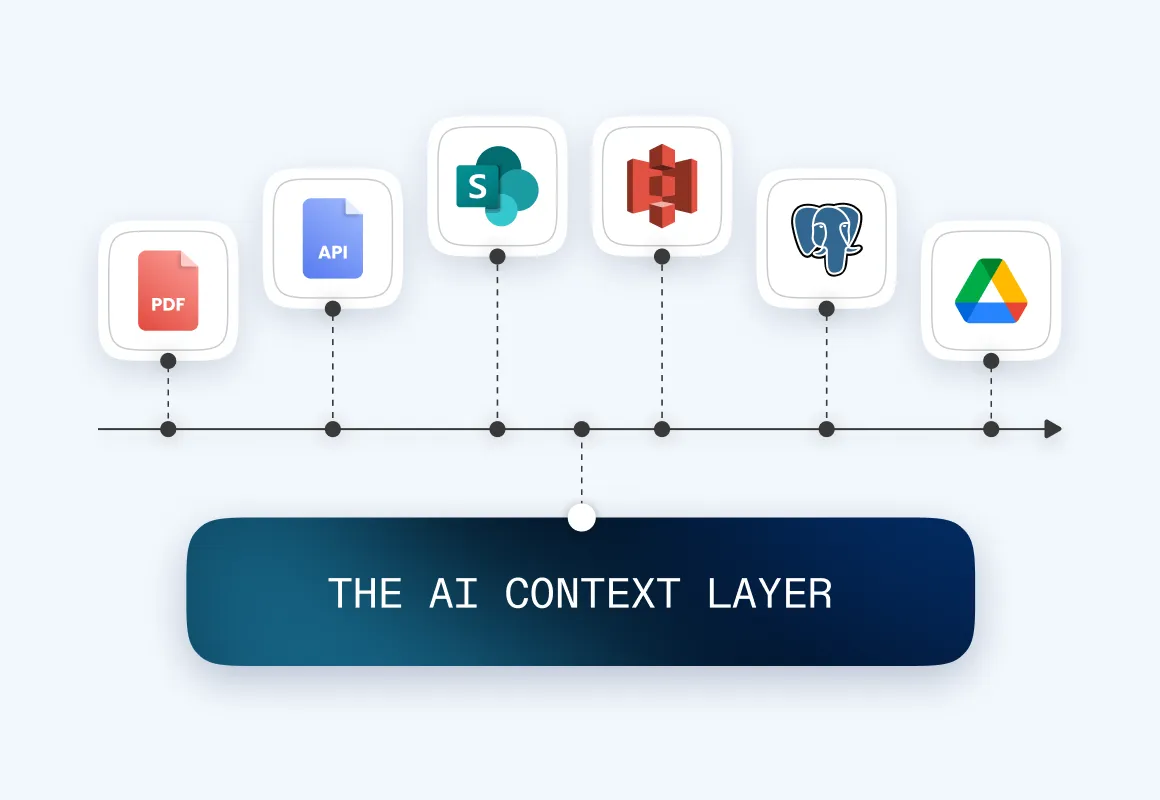

Gaps show up once teams try to run AI against real documents, real workflows, and real operational constraints. Context becomes inconsistent. Retrieval gets noisy. Prompts multiply. The system becomes harder to explain, harder to govern, and harder to trust. This is where many teams lose momentum. They start by trying to solve a business problem, then end up building AI infrastructure instead. We’ll cover how to:

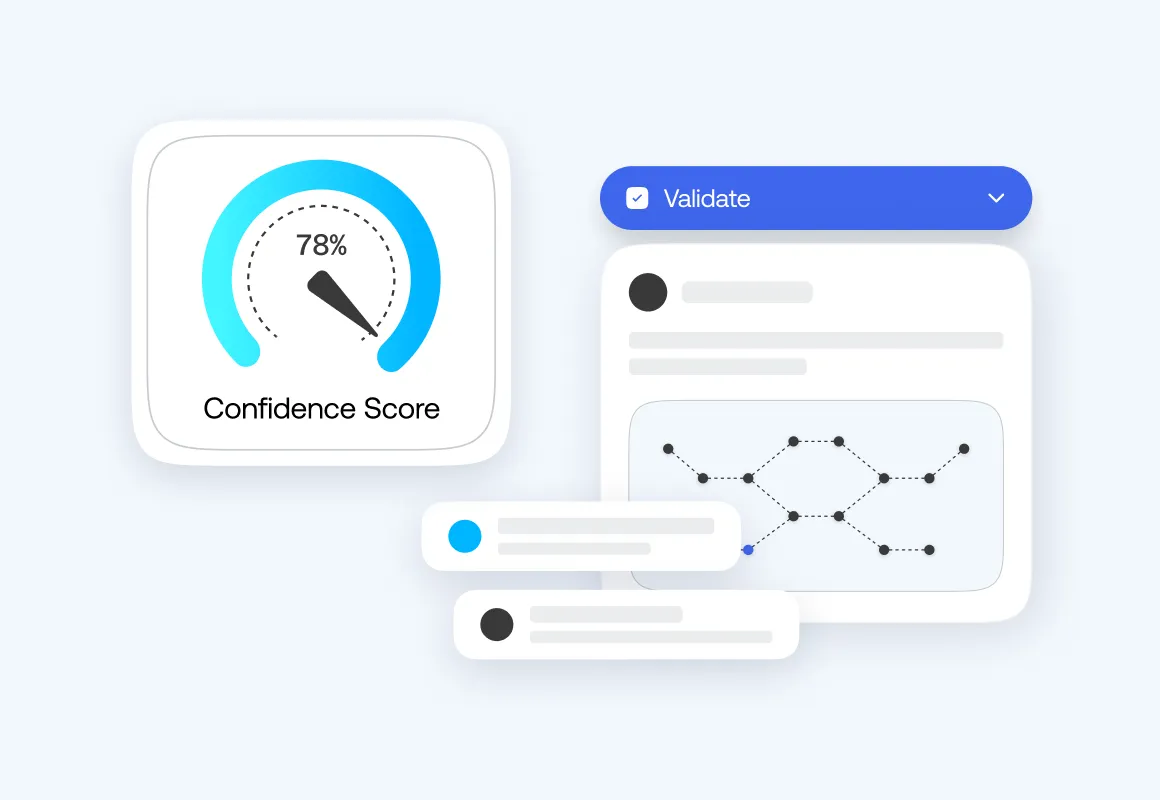

If you cannot evaluate whether an answer is grounded in the right data, appropriate for the task, and reliable enough to act on, then you do not have a production system. You have a prototype with risk attached to it. Confidence scoring changes that. It gives teams a way to assess uncertainty across ingestion, extraction, retrieval, and final output, so they can decide what should be automated, what needs review, and where the system needs improvement.
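To make the idea concrete, here is a minimal sketch of how per-stage confidence could drive that decision. It is an illustration only, not the product's method: the stage names come from the paragraph above, while the `route` function, the 0 to 1 score scale, and both thresholds are assumptions chosen for the example.

```python
# Hypothetical sketch: route one result based on its weakest pipeline stage.
# The function name, score scale (0.0 to 1.0), and thresholds are assumptions.

STAGES = ("ingestion", "extraction", "retrieval", "output")


def route(scores, automate_at=0.9, review_at=0.6):
    """Return (decision, weakest_stage) for one set of per-stage scores."""
    missing = [stage for stage in STAGES if stage not in scores]
    if missing:
        raise ValueError(f"missing stage scores: {missing}")

    # A result is only as trustworthy as its least confident stage.
    weakest_stage = min(STAGES, key=lambda stage: scores[stage])
    weakest = scores[weakest_stage]

    if weakest >= automate_at:
        return "automate", weakest_stage
    if weakest >= review_at:
        return "review", weakest_stage
    # Below the review threshold, the stage itself needs work.
    return "improve", weakest_stage


if __name__ == "__main__":
    example = {"ingestion": 0.95, "extraction": 0.97, "retrieval": 0.70, "output": 0.92}
    print(route(example))
```

Routing on the weakest stage rather than an average keeps a single noisy step, such as retrieval, from being hidden by strong scores elsewhere, and it points to where the system needs improvement.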

If your team has proven AI can work and now needs it to work in production, yes. It is built for engineers, AI leads, product owners, and technical executives trying to move from pilot to reliable deployment.

Three things: context, control, and confidence. How to get the right data to the model, how to govern workflow behavior, and how to measure whether outputs are safe to trust in production.

Yes. We will show real production journeys from teams that moved past early AI wins, hit complexity, and found a path to scale.

It is the gap between a prototype that works and a production system that holds up under real conditions. The model is rarely the issue. Retrieval, workflow control, and output validation usually are.

It is when your team stops shipping product and starts building AI infrastructure: prompt libraries, pipelines, monitoring layers, and validation logic. The work grows, and the roadmap slows down.

It measures whether an output is grounded, reliable, and fit for the task before it reaches production. The goal is not just a probability. It is trust you can act on.

Context engineering is how you prepare and retrieve data so AI can use it reliably at runtime. When context is weak, output quality drops fast.

Yes. Everyone who registers will receive it after the session.